Zeppelin notebooks are a web-based editor in which data developers, analysts and scientists can develop code (Scala, Python, SQL and others) interactively and visualize the data.

I will demonstrate simple notebook functionality: querying data in Hive tables, aggregating the data and saving the result to a new Hive table. For more details, read https://hortonworks.com/apache/zeppelin/.

My HDInsight Spark 2.0 cluster is configured with Azure Data Lake Store as the primary data storage. The Zeppelin notebook has to be installed through the Add Service Wizard; see http://docs.hortonworks.com/HDPDocuments/HDP2/HDP-2.5.3/bk_zeppelin-component-guide/content/ch_installation.html

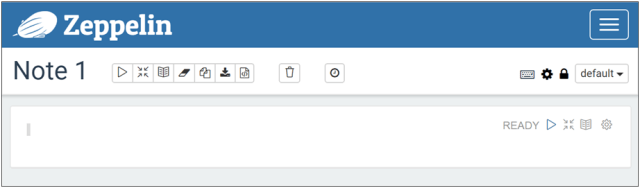

To start off, create a new note.

A new note starts with one empty paragraph, where you write code; its results section displays outputs and error messages. A note can have many paragraphs, and they all share the same context. For more details read https://zeppelin.apache.org/docs/0.6.1/quickstart/explorezeppelinui.html#note-layout

Show the tables in the usdata database. Press Shift+Enter to run the paragraph, or click the play button on the top right.

I create another paragraph with code that queries a Hive table, displays the first 2 rows and counts the records.

%livy.spark declares the interpreter. The programming language is Scala.

crimesDF is a data frame object that holds the data in named columns, following the schema of the Hive table it was queried from. For details read http://spark.apache.org/docs/latest/sql-programming-guide.html#datasets-and-dataframes

The number of records counted is 6,312,976. The execution took about 21 seconds, which I consider quite fast.

In this paragraph, I:

- Query the crimesDF data frame created in the previous paragraph. crimebytypeDF will contain the number of crimes aggregated by primary type and year.

- Save this result into a new Hive table using the saveAsTable function.

- Show the first two rows for testing.

- Convert the data frame to an RDD, so that more sophisticated transformations can be applied in future scenarios. An RDD loses the named column support of data frames.

- Print the first 5 rows of the RDD for testing.

This execution took 1 minute and 52 seconds, which is again very fast.
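To make the aggregation step concrete, here is a minimal sketch of what it computes, written with plain Scala collections so it runs without a cluster. It is not the Spark code itself. The `CrimeCountSketch` object, the `Crime` case class, the `countByTypeAndYear` helper and the sample rows are all hypothetical and exist only for illustration.

```scala
// Plain Scala, no Spark required. All names and sample values below are
// hypothetical; they only mirror the shape of the aggregation in the notebook.
object CrimeCountSketch {
  final case class Crime(id: Long, year: Int, primaryType: String)

  // Keep rows with year < beforeYear, group them by (primaryType, year),
  // and count the distinct ids in each group.
  def countByTypeAndYear(rows: Seq[Crime], beforeYear: Int): Map[(String, Int), Int] =
    rows
      .filter(_.year < beforeYear)
      .groupBy(c => (c.primaryType, c.year))
      .map { case (key, group) => key -> group.map(_.id).distinct.size }

  def main(args: Array[String]): Unit = {
    val sample = Seq(
      Crime(1L, 2015, "THEFT"),
      Crime(2L, 2015, "THEFT"),
      Crime(2L, 2015, "THEFT"),   // repeated id, counted once
      Crime(3L, 2016, "BATTERY"),
      Crime(4L, 2017, "THEFT")    // removed by the year filter
    )
    // Print one line per (primaryType, year) group, sorted by key.
    countByTypeAndYear(sample, 2017).toSeq.sortBy(_._1).foreach(println)
  }
}
```

In a notebook paragraph the object can be defined and then run with `CrimeCountSketch.main(Array.empty)`. On the real table, Spark performs the same filter, grouping and distinct count, but distributed across the cluster.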

The full code:

%livy.spark

spark.sql("Use usdata")

spark.sql("Show tables").show()

val crimesDF = spark.sql("SELECT * FROM crimes")

crimesDF.show(2, false)

crimesDF.count()

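// countDistinct lives in org.apache.spark.sql.functions; the import is harmless if the interpreter already provides it.

import org.apache.spark.sql.functions.countDistinct

val crimebytypeDF = crimesDF.select($"id", $"year", $"primarytype").where($"year" < 2017).groupBy($"primarytype", $"year").agg(countDistinct($"id") as "Count")

// save the results data frame into a hive table; "overwrite" mode replaces the table if it already exists.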

crimebytypeDF.write.mode("overwrite").saveAsTable("crimebytype_zep")

// display the first 2 rows

crimebytypeDF.show(2, false)

// convert the data frame to an RDD and print the first 5 rows. An RDD allows more control over the data for transformations.

val crimebytypeRDD = crimebytypeDF.rdd

crimebytypeRDD.take(5).foreach(println)

I have shown a brief, basic example of using a Zeppelin notebook to manage data in Hive tables for ad hoc data analysis. I like that queries and operations run much faster than in Hive View in Ambari or in Visual Studio with the Azure Data Lake Tools plugin. I also like the notebook ‘paragraph’ concept, because I can run small chunks of code instead of keeping them in separate files or commenting and uncommenting lines of code. I would start by writing the core logic of my big data applications in notebooks and then move it into IntelliJ or Visual Studio, which are better suited to larger-scale application development.