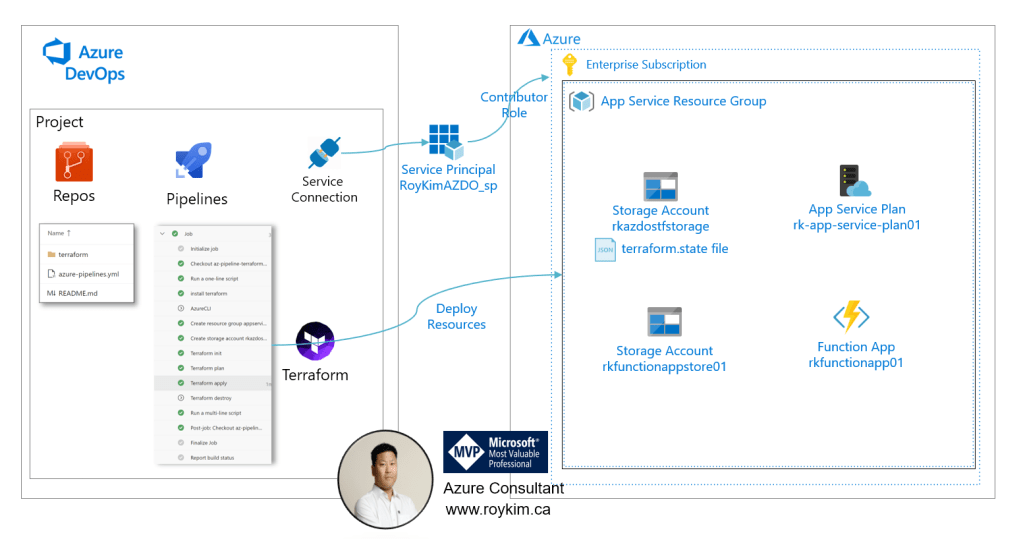

This post walks through a simple starter approach to building an Azure DevOps pipeline that deploys an Azure resource with Terraform. I deploy an Azure Function App as the example.

Automating deployments this way promotes consistency, repeatability and collaboration, and makes operating your Azure infrastructure more productive and efficient.

Assumptions

Demo Design

- AZDO Repo

- pipeline

- terraform module

- Azure

- Subscription

- Resource Group

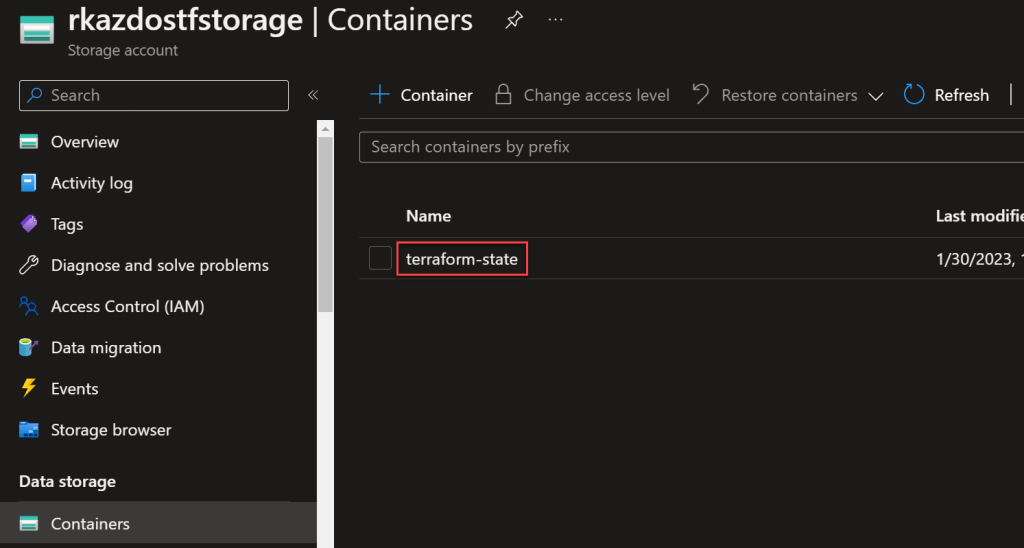

- Storage account for terraform backend state file(s)

- Azure App Service plan

- Azure Function App

- Storage account for the Function App

- Service principal for the AZDO service connection, assigned the Contributor role on the subscription

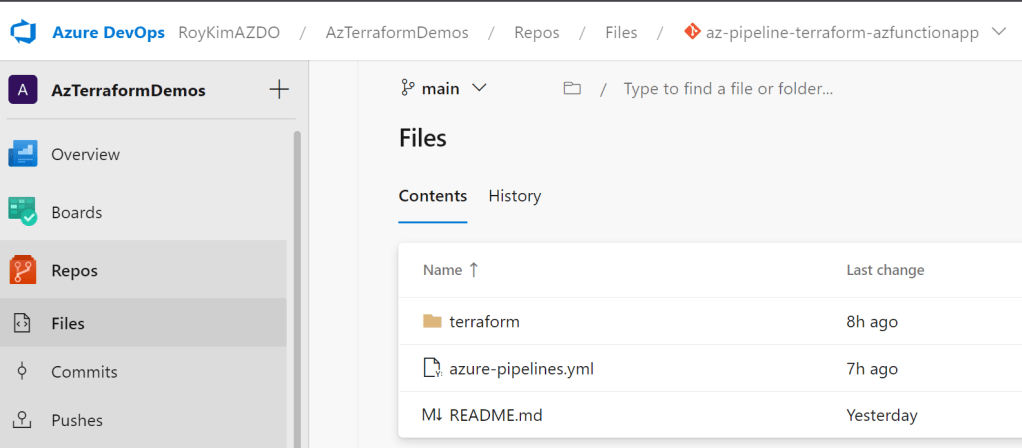

1. I created a new project as follows:

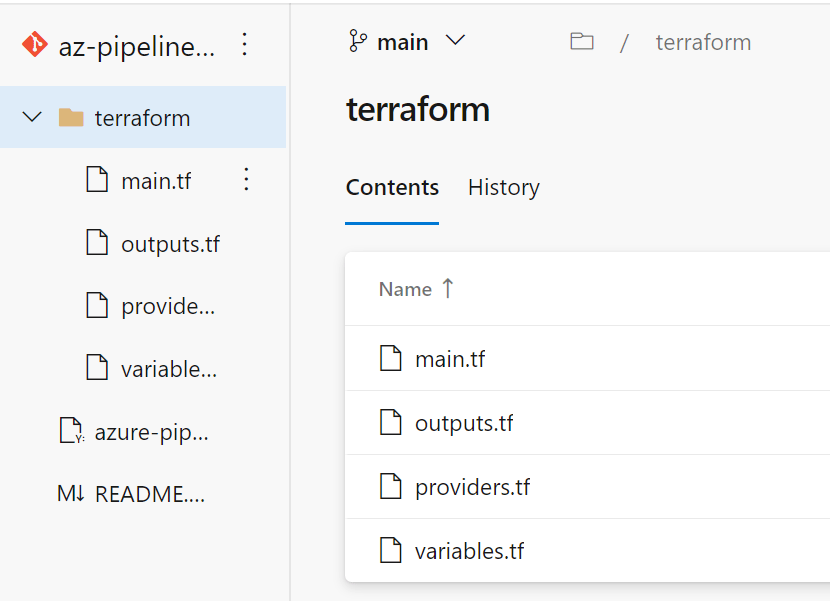

2. My AZDO repo contains a starter YAML pipeline, and I created a subfolder called terraform.

YAML pipelines keep the build and release definition in source control, which makes it easier to review, version and reuse than a pipeline configured only through the Azure DevOps UI.

In a YAML pipeline, you define the stages, steps and tasks of your pipeline in a YAML file and check it into your source control repository. The terraform folder holds the Terraform configuration that the pipeline executes.

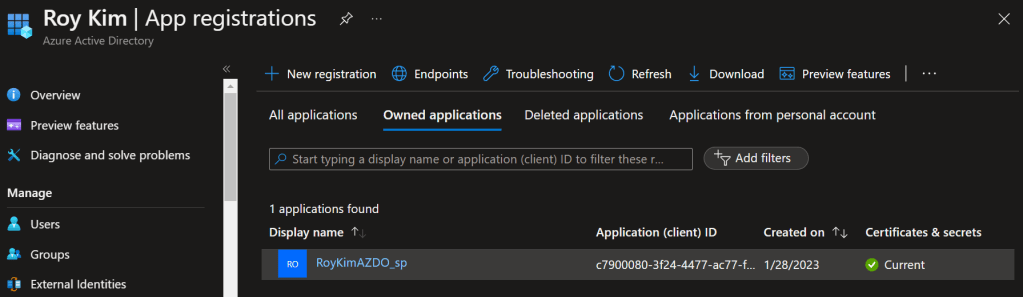

3. For your pipeline to create resources in your Azure subscription, you need an Azure service principal with permission to create the required resources, such as the resource group, storage account and Azure Function App.

An Azure AD service principal is a security identity that represents an application or service in Azure and enables it to interact with Azure resources in a secure and controlled manner. It is used to authenticate and authorize applications and services that need to access Azure resources, and to manage their permissions and access to those resources.

I created a service principal for the Azure DevOps service connection by going to Azure AD > App registrations.

When creating it, take note of the client ID and the client secret. The secret acts as the password, and both values are needed when creating the AZDO service connection.

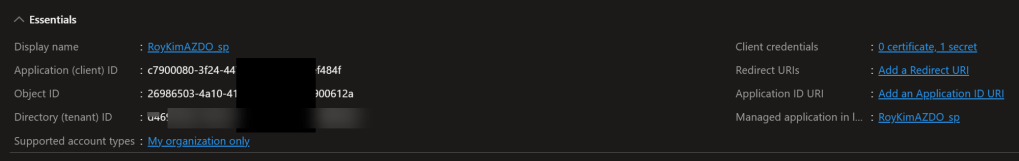

Clicking into the details you can see the following:

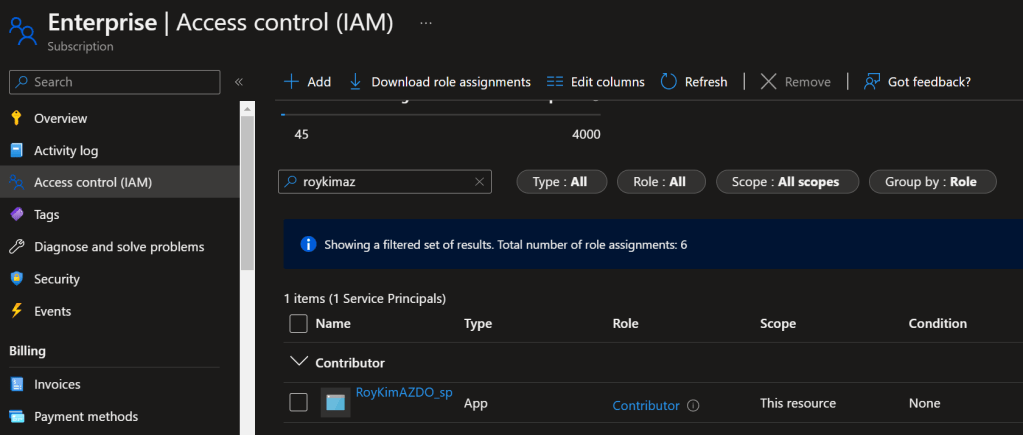

Next, in my demo, I granted this service principal the Contributor role on the subscription. This enables the service principal to create any Azure resource in that subscription. As a security best practice, you should follow the principle of least privilege and grant only the specific Azure RBAC roles it needs, at the narrowest scope that works.
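As a sketch of the least-privilege alternative, the service principal could be created with the Azure CLI and scoped to the demo's resource group instead of the whole subscription. The display name `azdo-terraform-demo`, the placeholder subscription ID and the `DRY_RUN` switch are assumptions for illustration and not part of my setup; the script only prints the command unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Sketch only: create a service principal limited to one resource group.
# SUBSCRIPTION_ID and SP_NAME are placeholders, not values from the demo.
SUBSCRIPTION_ID="${SUBSCRIPTION_ID:-00000000-0000-0000-0000-000000000000}"
RESOURCE_GROUP="appservice"       # resource group name used by the pipeline
SP_NAME="azdo-terraform-demo"     # hypothetical display name

# Role assignments are scoped by resource ID; this one stops at the resource group.
SCOPE="/subscriptions/${SUBSCRIPTION_ID}/resourceGroups/${RESOURCE_GROUP}"

# Print each command; execute it only when DRY_RUN=0 is set explicitly.
run() {
  echo "+ $*"
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; fi
}

# A real run returns appId (the client ID) and password (the client secret).
run az ad sp create-for-rbac --name "$SP_NAME" --role Contributor --scopes "$SCOPE"
```

With this narrower scope the resource group has to exist already, because the service principal has no rights at subscription level to create one.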

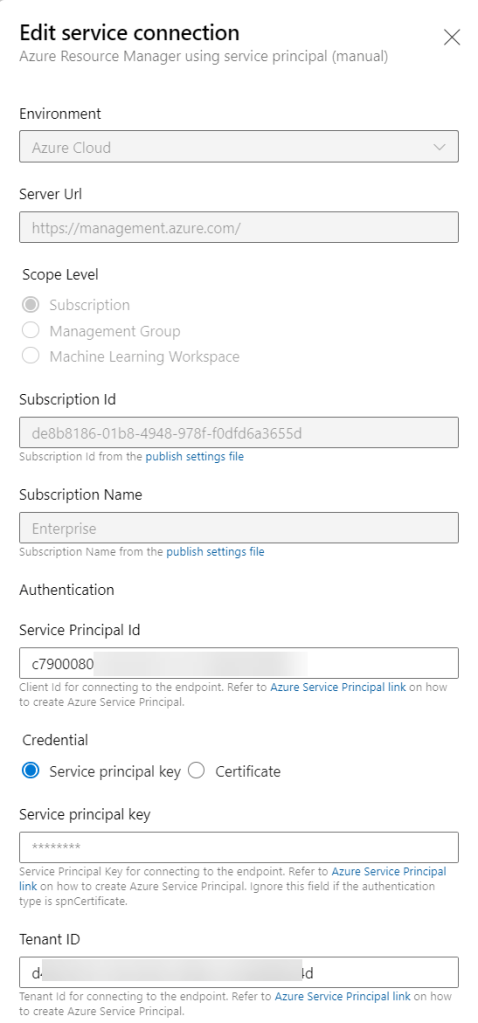

4. You need a service connection that the pipeline refers to so that its tasks can authenticate to Azure.

In Project settings > Service connections, I provided these properties.

Another option is to use an Azure managed identity, which removes the need to worry about the client secret expiring. However, this does not work with AZDO pipelines on the Microsoft-hosted build agent that I am using in this demo. Managed identities do work with self-hosted agents running on Azure VMs in your subscription.

5. I have prepared these terraform files which are used by the pipeline.

You can develop these in Visual Studio Code and run them locally with Terraform commands such as terraform init, terraform plan and terraform apply. Alternatively, you can develop and test them through the pipeline itself by calling the same Terraform commands from pipeline tasks.
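A local run of those commands could be sketched as follows. It assumes you are in the terraform folder and already signed in with `az login`; the values mirror the pipeline variables used later in this post, and the `DRY_RUN` switch is an illustration aid so the commands are printed rather than executed by default.

```shell
#!/bin/sh
# Sketch only: local equivalent of the pipeline's init, plan and apply steps.

# Terraform reads any TF_VAR_<name> environment variable as input variable <name>.
export TF_VAR_environment="dev"
export TF_VAR_resource_group_name="appservice"
export TF_VAR_location="canadacentral"
export TF_VAR_functionapp_storage_account_name="rkfunctionappstor01"
export TF_VAR_azurerm_windows_function_app_name="rkfunctionapp01"

# Print each command; execute it only when DRY_RUN=0 is set explicitly.
run() {
  echo "+ $*"
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; fi
}

# The azurerm backend block is left empty in providers.tf, so its settings
# are passed at init time.
run terraform init \
  -backend-config="resource_group_name=appservice" \
  -backend-config="storage_account_name=rkazdostfstorage" \
  -backend-config="container_name=terraform-state" \
  -backend-config="key=terraform.tfstate"

# Save the plan to a file, then apply exactly that reviewed plan.
run terraform plan -input=false -out=tfplan
run terraform apply -input=false tfplan
```

Using environment variables keeps the command lines short; passing each value with `-var`, as the pipeline does, works equally well.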

Here are the terraform file contents:

providers.tf

    terraform {
      required_version = ">=1.0" # https://github.com/hashicorp/terraform/releases

      required_providers {
        azurerm = {
          source  = "hashicorp/azurerm"
          version = "~>3.0" # https://registry.terraform.io/providers/hashicorp/azurerm/latest
        }
        random = {
          source  = "hashicorp/random"
          version = "~>3.0"
        }
      }

      # If a configuration includes no backend block, Terraform defaults to using the local backend, which stores state as a plain file in the current working directory.
      backend "azurerm" {
      }
    }

    provider "azurerm" {
      features {}
    }

variables.tf

    variable "environment" {
      type = string
    }

    variable "resource_group_name" {
      type = string
    }

    variable "location" {
      type    = string
      default = "canada central"
    }

    variable "functionapp_storage_account_name" {
      type = string
    }

    variable "azurerm_windows_function_app_name" {
      type = string
    }

main.tf

    data "azurerm_resource_group" "rg" {
      name = var.resource_group_name
    }

    resource "azurerm_storage_account" "example" {
      name                     = var.functionapp_storage_account_name
      resource_group_name      = data.azurerm_resource_group.rg.name
      location                 = var.location
      account_tier             = "Standard"
      account_replication_type = "LRS"
    }

    resource "azurerm_service_plan" "example" {
      name                = "rk-app-service-plan01"
      resource_group_name = data.azurerm_resource_group.rg.name
      location            = var.location
      os_type             = "Windows"
      sku_name            = "Y1"
    }

    resource "azurerm_windows_function_app" "example" {
      name                = var.azurerm_windows_function_app_name
      resource_group_name = data.azurerm_resource_group.rg.name
      location            = var.location

      storage_account_name       = azurerm_storage_account.example.name
      storage_account_access_key = azurerm_storage_account.example.primary_access_key
      service_plan_id            = azurerm_service_plan.example.id

      site_config {}
    }
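The storage account name passed in through `functionapp_storage_account_name` has to follow Azure's naming format: 3 to 24 characters, lowercase letters and digits only (it must also be globally unique, which cannot be checked offline). A small pre-flight check for the format rule could be sketched like this; the function name is hypothetical and not part of the pipeline.

```shell
#!/bin/sh
# Sketch only: check a name against the storage account naming format
# (3-24 characters, lowercase letters and digits). Uniqueness is not checked.
LC_ALL=C          # make the a-z range below mean ASCII lowercase only
export LC_ALL

is_valid_storage_account_name() {
  name=$1
  len=${#name}
  if [ "$len" -lt 3 ] || [ "$len" -gt 24 ]; then
    return 1
  fi
  case $name in
    *[!a-z0-9]*) return 1 ;;   # contains a character outside a-z and 0-9
  esac
  return 0
}

for candidate in rkfunctionappstor01 rkazdostfstorage Rk-Function-App; do
  if is_valid_storage_account_name "$candidate"; then
    echo "$candidate: ok"
  else
    echo "$candidate: invalid"
  fi
done
```

The two names used in this demo pass the check; the third is rejected because of its uppercase letters and hyphens.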

6. Before developing the pipeline YAML file, I need to install the Terraform extension from the Visual Studio Marketplace, since the pipeline runs Terraform tasks: https://marketplace.visualstudio.com/items?itemName=ms-devlabs.custom-terraform-tasks

7. The YAML pipeline is shown below; the inline comments explain each step.

    # Starter pipeline
    # Start with a minimal pipeline that you can customize to build and deploy your code.
    # Add steps that build, run tests, deploy, and more:
    # https://aka.ms/yaml

    trigger:
    - main

    pool:
      vmImage: ubuntu-latest

    # Define variables
    variables:
    - name: environment
      value: dev
    - name: location
      value: canadacentral
    - name: subscriptionId
      value: d<redacted>
    - name: serviceConnectionName
      value: 'Enterprise Az Subscription'
    - name: resource_group_name
      value: appservice
    - name: storage_account_name
      value: rkazdostfstorage
    - name: functionapp_storage_account_name
      value: rkfunctionappstor01
    - name: azurerm_windows_function_app_name
      value: rkfunctionapp01

    # Part of the starter YAML pipeline template; a good example for debugging.
    steps:
    - script: echo Hello, world!
      displayName: 'Run a one-line script'

    # Install Terraform
    # Because this runs on a Microsoft-hosted agent, Terraform has to be installed and its version specified.
    - task: TerraformInstaller@0
      displayName: install terraform
      inputs:
        terraformVersion: latest

    # Configure Azure Provider
    # This step is optional; it is only here for debugging and to test the AzureCLI task.
    - task: AzureCLI@2
      condition: false
      inputs:
        azureSubscription: $(serviceConnectionName)
        scriptType: 'pscore'
        scriptLocation: 'inlineScript'
        inlineScript: |
          az account show

    # A resource group is needed for the solution to be deployed. At a minimum, it must
    # contain the storage account that holds the Terraform state file.
    - task: AzureCLI@2
      displayName: 'Create resource group $(resource_group_name)'
      condition: false
      inputs:
        azureSubscription: $(serviceConnectionName)
        scriptType: 'bash'
        scriptLocation: 'inlineScript'
        inlineScript: |
          az group create -n $(resource_group_name) -l $(location)

    # A storage account is needed to store the Terraform state file. The state file is what
    # Terraform uses to record the state of the resources that it manages. It keeps track of
    # the current state of the infrastructure, and Terraform uses this information to
    # determine the changes needed to bring the infrastructure to the desired state.
    # Placing it in a storage account promotes collaboration, because other DevOps engineers
    # can update and run the Terraform code against the same state.
    - task: AzureCLI@2
      displayName: 'Create storage account $(storage_account_name) for terraform state files'
      condition: false
      inputs:
        azureSubscription: $(serviceConnectionName)
        scriptType: 'bash'
        scriptLocation: 'inlineScript'
        inlineScript: |
          az storage account create -n $(storage_account_name) -g $(resource_group_name) -l $(location) --sku Standard_LRS

    # Initialize Terraform
    # Initialization ensures that Terraform has the information it needs to manage the
    # infrastructure, including the plugins for the providers that you are using, the state of
    # your resources, and the backend to store the state file.
    - task: TerraformTaskV2@2
      displayName: 'Terraform init'
      inputs:
        command: 'init'
        provider: 'azurerm'
        workingDirectory: '$(System.DefaultWorkingDirectory)/terraform'
        backendServiceArm: '$(serviceConnectionName)'
        backendAzureRmResourceGroupName: '$(resource_group_name)'
        backendAzureRmResourceGroupLocation: '$(location)'
        backendAzureRmStorageAccountName: '$(storage_account_name)'
        backendAzureRmContainerName: 'terraform-state'
        backendAzureRmKey: 'terraform.tfstate'
        commandOptions: '-lock=false'

    # The terraform plan command takes your Terraform configuration as input and
    # compares the desired state specified in the configuration to the current state stored in
    # the Terraform state file. It then generates an execution plan, which is a summary of
    # the changes that Terraform will make to your infrastructure in order to bring it in line
    # with the desired state specified in the configuration.
    - task: TerraformTaskV3@0
      displayName: 'Terraform plan'
      inputs:
        command: 'plan'
        workingDirectory: '$(System.DefaultWorkingDirectory)/terraform'
        environmentServiceNameAzureRM: '$(serviceConnectionName)' # Service connection or to override the subscription id defined in a Subscription scoped service connection
        commandOptions: '-var "environment=$(environment)" -var "resource_group_name=$(resource_group_name)" -var "location=$(location)" -var "functionapp_storage_account_name=$(functionapp_storage_account_name)" -var "azurerm_windows_function_app_name=$(azurerm_windows_function_app_name)" -input=false'
    # The -input=false option indicates that Terraform should not attempt to prompt for input, and instead expect all necessary values to be provided by either configuration files or the command line

    # Provisions the specified resources in your infrastructure, updates existing resources
    # as necessary, and deletes any resources that are no longer specified in your Terraform
    # configuration.
    - task: TerraformTaskV3@0
      displayName: 'Terraform apply'
      inputs:
        command: 'apply'
        workingDirectory: '$(System.DefaultWorkingDirectory)/terraform'
        environmentServiceNameAzureRM: '$(serviceConnectionName)' # Service connection or to override the subscription id defined in a Subscription scoped service connection
        commandOptions: '-var "environment=$(environment)" -var "resource_group_name=$(resource_group_name)" -var "location=$(location)" -var "functionapp_storage_account_name=$(functionapp_storage_account_name)" -var "azurerm_windows_function_app_name=$(azurerm_windows_function_app_name)" -input=false'

    # Delete the Azure resources under Terraform management. The condition flag keeps this
    # step disabled until you enable it manually.
    - task: TerraformTaskV3@0
      displayName: 'Terraform destroy'
      condition: false # disable destroying
      inputs:
        command: 'destroy'
        workingDirectory: '$(System.DefaultWorkingDirectory)/terraform'
        environmentServiceNameAzureRM: '$(serviceConnectionName)' # Service connection or to override the subscription id defined in a Subscription scoped service connection
        commandOptions: '-var "environment=$(environment)" -var "resource_group_name=$(resource_group_name)" -var "location=$(location)" -var "functionapp_storage_account_name=$(functionapp_storage_account_name)" -var "azurerm_windows_function_app_name=$(azurerm_windows_function_app_name)"'

    - script: |
        echo Finished AZDO pipeline demo execution
        echo See https://aka.ms/yaml
      displayName: 'Run a multi-line script'
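The init step points at a `terraform-state` container, and none of the steps shown creates it. If the backend storage does not exist yet, a one-time bootstrap could be sketched as follows. It assumes you are signed in with `az login` and have rights to create these resources; the `DRY_RUN` switch is an illustration aid, so the commands are only printed by default.

```shell
#!/bin/sh
# Sketch only: one-time creation of the storage used for Terraform state.
RESOURCE_GROUP="appservice"
LOCATION="canadacentral"
STORAGE_ACCOUNT="rkazdostfstorage"
CONTAINER="terraform-state"   # must match backendAzureRmContainerName in the init step

# Print each command; execute it only when DRY_RUN=0 is set explicitly.
run() {
  echo "+ $*"
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; fi
}

run az group create -n "$RESOURCE_GROUP" -l "$LOCATION"
run az storage account create -n "$STORAGE_ACCOUNT" -g "$RESOURCE_GROUP" -l "$LOCATION" --sku Standard_LRS

# --auth-mode login uses your signed-in identity instead of an account key
# (assumption: that identity holds a blob data role on the storage account).
run az storage container create --name "$CONTAINER" --account-name "$STORAGE_ACCOUNT" --auth-mode login
```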

Upon running the pipeline we get:

This is the container in the storage account, together with the Terraform state file stored in it. The state file records the current state of the infrastructure managed by Terraform, including each resource that Terraform has created, its type, its ID and its current attributes.

Here’s a snippet of the file contents.

Here are the resources that have been created by the pipeline.