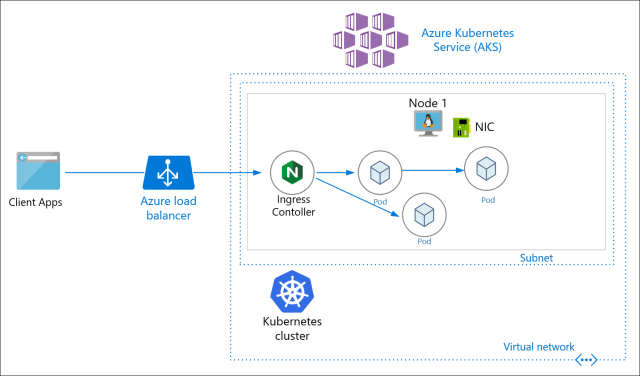

In this second configuration profile, I walk through the resulting AKS configuration and its effect on the Load Balancer, Virtual Network, and VM network interface cards, then deploy and test a web application in the Azure Kubernetes Service (AKS) cluster. The profile centers on the Azure CNI network model.

Please read the Part 1 intro of this blog series if you haven’t already. This will explain the background and what each configuration setting means. Here is the previous blog post for Part 2 Kubenet.

Configuration Profile 2

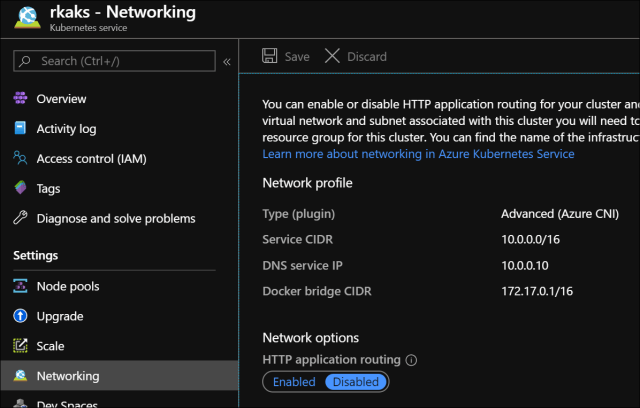

- Network Model/Type: Advanced (Azure CNI)

- Http application routing: Disabled

- Virtual Machine Scale Set: Enabled

2 VM instances in the scale set

AKS Networking settings

Resources in the AKS Infrastructure Resource Group

Virtual Machine Scale Set (VMSS)

In this screenshot, one VM instance in the scale set happens to be stopped. This is arbitrary and has no bearing on this article.

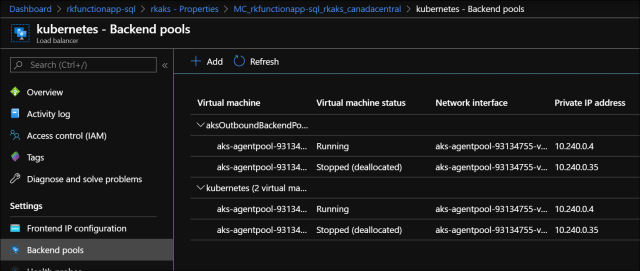

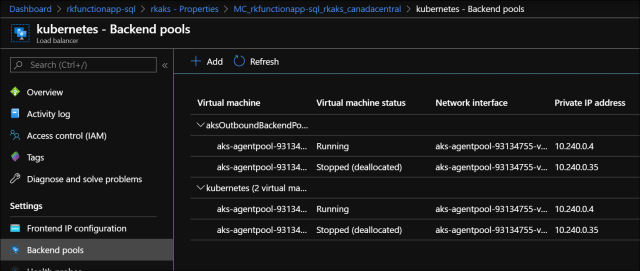

Load Balancer

The frontend IP configuration has two public IP addresses assigned. One of them is the outbound public IP.

The backend pools contain both VM instances. One backend pool is for outbound traffic.

The load balancing rules apply to the two VM instances.

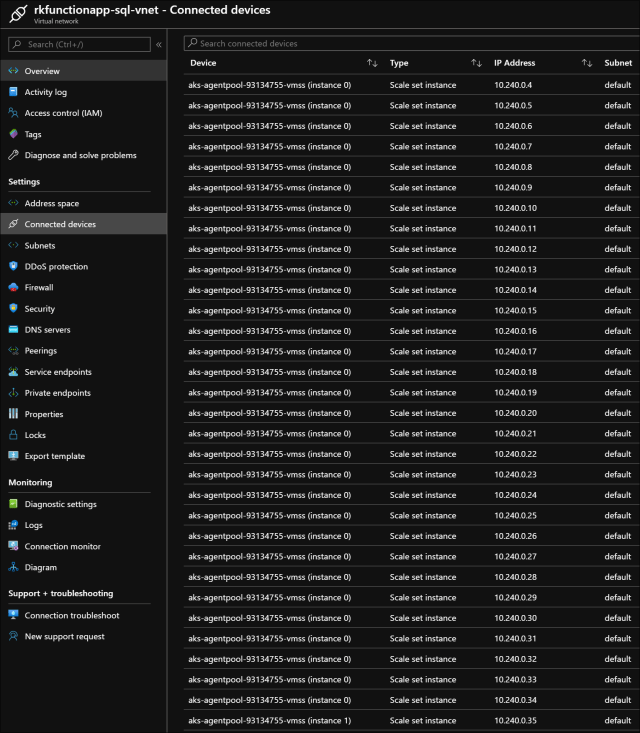

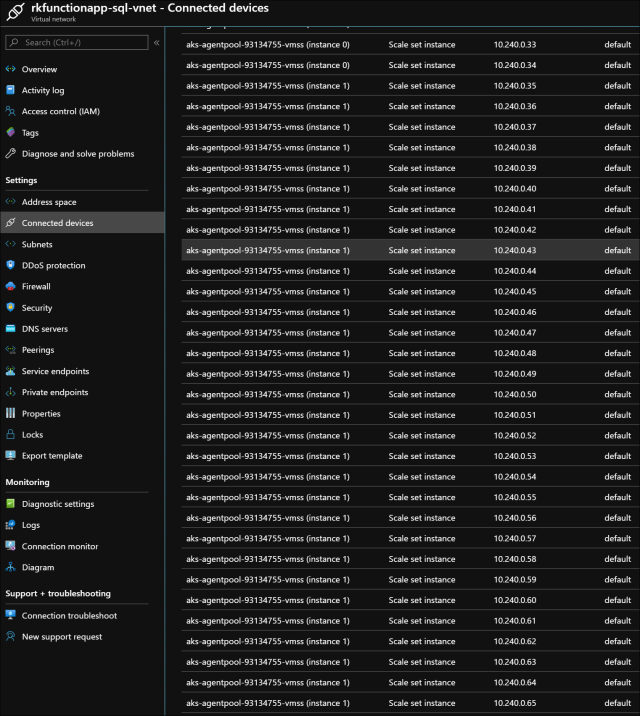

Virtual Network – Connected devices

Because the network model/plugin is Advanced (Azure CNI), each VM instance reserves subnet IP addresses for its pods up front, 30 per instance by default.

Scrolling down the list, we see the 2nd VM instance in the scale set getting 30 IP addresses for its pods.
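To make the address consumption concrete, here is a minimal sketch of the arithmetic, assuming each node takes one IP for itself plus one pre-allocated IP per potential pod, and that Azure reserves five addresses in every subnet. The function names and the example subnet range are hypothetical and not taken from this cluster.

```python
import ipaddress

AZURE_RESERVED_PER_SUBNET = 5  # addresses Azure holds back in every subnet


def azure_cni_ips_required(node_count: int, max_pods_per_node: int = 30) -> int:
    """IPs consumed from the subnet: one per node plus one per potential pod."""
    return node_count * (1 + max_pods_per_node)


def usable_ips_in_subnet(cidr: str) -> int:
    """Addresses left for nodes and pods after Azure's reserved ones."""
    return ipaddress.ip_network(cidr).num_addresses - AZURE_RESERVED_PER_SUBNET


if __name__ == "__main__":
    needed = azure_cni_ips_required(node_count=2)   # the two VM instances above
    available = usable_ips_in_subnet("10.240.0.0/24")  # hypothetical subnet
    print(f"needed={needed}, available={available}, fits={needed <= available}")
```

With two instances and the default of 30 pods each, this comes to 62 addresses, which is worth checking against the subnet size before scaling out the node count.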

Using Helm, I deployed an NGINX ingress controller, following the steps in Create an ingress controller. I also deployed an AKS hello world application.

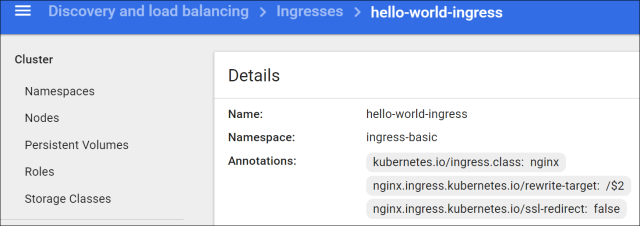

The Ingress Resource as shown in the Kubernetes Dashboard

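The ingress resource YAML below configures the HTTP paths for the NGINX ingress controller. Note that it uses the extensions/v1beta1 API, which was removed in Kubernetes 1.22; newer clusters need networking.k8s.io/v1.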

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      name: hello-world-ingress
      namespace: ingress-basic
      annotations:
        kubernetes.io/ingress.class: nginx
        nginx.ingress.kubernetes.io/ssl-redirect: "false"
        nginx.ingress.kubernetes.io/rewrite-target: /$2
    spec:
      rules:
      - http:
          paths:
          - backend:
              serviceName: aks-helloworld
              servicePort: 80
            path: /(.*)
          - backend:
              serviceName: ingress-demo
              servicePort: 80
            path: /hello-world-two(/|$)(.*)

Testing the application
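
When testing, it helps to know which backend a given request path should reach and what path the backend actually receives. The sketch below is a simplified model of the two ingress rules, not the controller's real implementation. It assumes the longer path regex is tried first, that the first match wins, and that a capture group missing from the matched pattern expands to an empty string in the rewrite target. The `route` function and its helper are hypothetical names.

```python
import re

# (path regex, backend service) as written in the ingress resource
RULES = [
    (r"/(.*)", "aks-helloworld"),
    (r"/hello-world-two(/|$)(.*)", "ingress-demo"),
]
REWRITE_TARGET = "/$2"  # value of the rewrite-target annotation


def route(request_path: str):
    """Return (service, rewritten_path) for a request path, or None if no rule matches."""
    # Assumption: longer path expressions are evaluated before shorter ones.
    for pattern, service in sorted(RULES, key=lambda r: len(r[0]), reverse=True):
        match = re.match("^" + pattern, request_path)
        if match is None:
            continue

        def expand(ref, match=match):
            # Replace $N with capture group N, or "" if the pattern has no such group.
            index = int(ref.group(1))
            if index > match.re.groups:
                return ""
            return match.group(index) or ""

        return service, re.sub(r"\$(\d+)", expand, REWRITE_TARGET)
    return None


if __name__ == "__main__":
    for path in ["/", "/hello-world-two", "/hello-world-two/foo"]:
        print(path, "->", route(path))
```

Under these assumptions, requests under /hello-world-two go to ingress-demo with the prefix stripped, and everything else goes to aks-helloworld. Because the catch-all rule has only one capture group, its rewrite target /$2 always yields / in this model.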

In summary, this configuration profile predominantly shows the effects of using the Azure CNI plugin and what the virtual network settings look like. Note that the VM Scale Set doesn’t affect the load balancer, but you can see how pod IP addresses are assigned to each VM instance’s network interface card. This blog post can serve as a point of reference for the resulting setup in screenshots and for how the Azure resources depend on and relate to one another.

Read the next blog post in this series for Part 4 on Azure CNI & Http Application Routing (coming soon).
